What is AI Token Pricing?

AI token pricing is a consumption-based (or "usage-based") model in which customers pay for AI services according to the number of tokens processed. In LLMs, a token is a unit of text, roughly 3/4 of a word in English, that the model uses to measure input and output. Instead of paying a flat monthly fee or a per-seat fee, customers pay in proportion to what they consume.

This model emerged because AI workloads are fundamentally different from traditional SaaS. A seat-based CRM costs the same to serve whether a rep logs in once or a thousand times. An AI model costs real money every time it runs inference. Token pricing passes that variable cost structure through to the customer.

What are AI tokens and how are they priced?

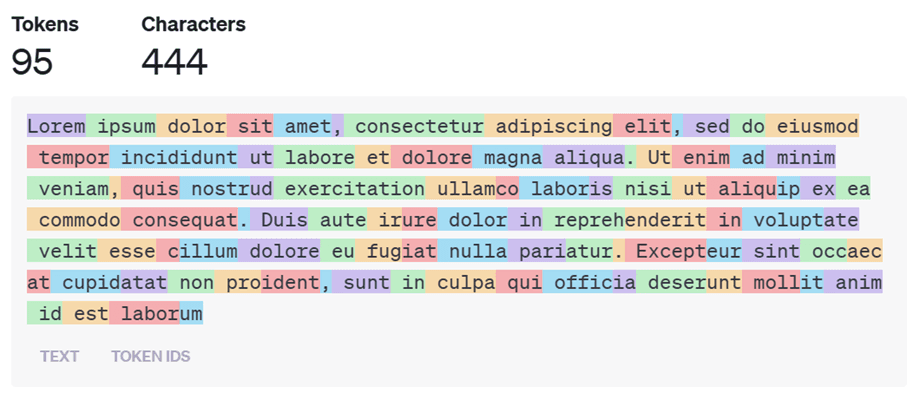

Tokens are sub-word fragments that language models use to process text. The word "monetization" might be split into three tokens ("mon", "etiz", "ation"), while common words like "the" are typically a single token. Different providers use different tokenizers, so the same text can produce different token counts across platforms.

(Image from Understanding AI Tokens and Their Importance)

Pricing is set per million tokens (MTok), with separate rates for input tokens (your prompt) and output tokens (the model's response). Output tokens are almost always more expensive, typically 3-8x the input price, because generating text requires more compute than reading it.

A simple API call works like this: you send a prompt (input tokens), the model processes it and generates a response (output tokens), and you're billed for both. The formula is straightforward:

Cost = (input tokens / 1M × input price) + (output tokens / 1M × output price)
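As a minimal sketch of the formula above, the following Python function computes the cost of a single call. The function name `call_cost` and the token counts are illustrative assumptions; the $3.00/$15.00 rates are the Claude Sonnet 4.6 figures listed in the comparison table later in this article.

```python
from decimal import Decimal

MILLION = Decimal(1_000_000)

def call_cost(input_tokens: int, output_tokens: int,
              input_price: str, output_price: str) -> Decimal:
    """Cost in dollars for one call; prices are dollars per 1M tokens."""
    input_cost = Decimal(input_tokens) / MILLION * Decimal(input_price)
    output_cost = Decimal(output_tokens) / MILLION * Decimal(output_price)
    return input_cost + output_cost

# Hypothetical call: 10,000 prompt tokens and 2,000 response tokens.
cost = call_cost(10_000, 2_000, "3.00", "15.00")
print(f"${cost:.4f}")
```

In this example the 2,000 output tokens cost as much as the 10,000 input tokens (three cents each), which is why response length matters as much as prompt length when estimating spend.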

Where it gets complicated: different models, different modalities (text, image, video, code), and different processing modes (standard, batch, cached) all carry different rates. A simple text completion is cheap. A multi-modal request with image analysis and long-form reasoning output is expensive.

AI token pricing comparison: how do major providers compare?

Pricing varies dramatically across providers. As of March 2026, here's how the major players stack up on their flagship and budget models:

Flagship models (highest capability)

| Provider | Model | Input (per 1M tokens) | Output (per 1M tokens) | Context window | Notes |
|---|---|---|---|---|---|
| OpenAI | GPT-5.4 | $2.50 | $10.00 | 1M+ | Newest flagship; cached input at ~$0.25 |
| OpenAI | GPT-5.2 | $1.75 | $14.00 | 1M+ | Previous flagship; cached input at ~$0.175 |
| Anthropic | Claude Opus 4.6 | $5.00 | $25.00 | 200K (1M beta) | Fast mode available at 6x rates |
| Anthropic | Claude Sonnet 4.6 | $3.00 | $15.00 | 200K (1M beta) | Long-context: $6/$22.50 over 200K input |
| Google | Gemini 3.1 Pro | $2.00 | $12.00 | 1M+ | Latest generation |
| xAI | Grok 3 | $3.00 | $15.00 | 131K | Integrated with X platform data |
| DeepSeek | V3.2 | $0.28 | $0.42 | 128K | Cache hits at $0.028 (90% savings) |

Budget and lightweight models

| Provider | Model | Input (per 1M tokens) | Output (per 1M tokens) | Context window | Best for |
|---|---|---|---|---|---|
| OpenAI | GPT-5 Mini | $0.25 | $2.00 | 128K+ | Routing, classification, simple tasks |
| OpenAI | GPT-5 Nano | $0.05 | $0.40 | 128K | Highest volume, lowest cost |
| Anthropic | Claude Haiku 4.5 | $1.00 | $5.00 | 200K | Fast responses, high-volume apps |
| Google | Gemini 2.5 Flash | $0.15 | $0.60 | 1M | Long-context on a budget |
| Google | Gemini 2.0 Flash-Lite | $0.075 | $0.30 | 1M | Cheapest mainstream option |
| xAI | Grok 4.1 Fast | $0.20 | $0.50 | 2M | Largest context window available |
| DeepSeek | R1 | $0.55 | $2.19 | 128K | Reasoning at budget pricing |

Prices from official provider documentation as of March 2026. Token pricing changes frequently. Always verify current rates before committing!

The spread is wide. At the listed rates, Claude Opus 4.6 costs roughly 18x more than DeepSeek V3.2 per input token and roughly 60x more per output token. That doesn't mean the cheaper model is the right choice. Capability, reliability, safety, latency, and enterprise support all factor in. But the cost differential explains why model routing, sending simple tasks to cheap models and only escalating complex ones, has become standard practice.
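How large the gap is depends on the mix of input and output tokens. The sketch below recomputes it from the listed Claude Opus 4.6 and DeepSeek V3.2 rates; the function names and the workload mixes are illustrative assumptions.

```python
# (input, output) prices in dollars per 1M tokens, taken from the tables above.
PRICES = {
    "Claude Opus 4.6": (5.00, 25.00),
    "DeepSeek V3.2": (0.28, 0.42),
}

def workload_cost(model: str, input_mtok: float, output_mtok: float) -> float:
    """Cost in dollars for a workload measured in millions of tokens."""
    in_price, out_price = PRICES[model]
    return input_mtok * in_price + output_mtok * out_price

def cost_ratio(input_mtok: float, output_mtok: float) -> float:
    """How many times more the workload costs on Opus than on DeepSeek."""
    return (workload_cost("Claude Opus 4.6", input_mtok, output_mtok)
            / workload_cost("DeepSeek V3.2", input_mtok, output_mtok))

for label, mix in [("input only", (1, 0)), ("output only", (0, 1)), ("even split", (1, 1))]:
    print(label, round(cost_ratio(*mix), 1))
```

At these rates the gap is about 18x for input-only work, about 60x for output-only work, and about 43x for a workload with 1M input and 1M output tokens.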

What factors influence the cost per token for large language models?

Token pricing isn't arbitrary. Several structural factors determine what providers charge and what you end up paying:

| Factor | How it affects pricing | Example |
|---|---|---|
| Model size and capability | Larger, more capable models cost more to run | GPT-5.2 Pro at $21/$168 vs. GPT-5 Nano at $0.05/$0.40 |
| Input vs. output | Output tokens cost 3-8x more because generation is compute-intensive | Claude Opus 4.6: $5 input vs. $25 output (5x ratio) |
| Prompt caching | Repeated prompts can be cached for 50-90% savings | DeepSeek cache hits: $0.028 vs. $0.28 (90% discount) |
| Batch vs. real-time | Asynchronous processing (24hr window) costs ~50% less | Anthropic Batch API: Sonnet 4.6 drops to $1.50/$7.50 |
| Context length | Longer contexts can trigger premium pricing tiers | Claude Sonnet 4.6: $3/$15 under 200K, $6/$22.50 over 200K |
| Modality | Image, audio, and video processing cost more than text | OpenAI image generation priced per image, not per token |
| Processing tier | Priority/fast modes charge premiums for lower latency | Claude Opus 4.6 fast mode: 6x standard rates |
| Volume commitments | Enterprise agreements lower per-token rates | Custom pricing available from all major providers at scale |
| GPU costs and competition | Infrastructure improvements and competition drive prices down | Model costs have dropped roughly 10x every 18 months |
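Two of these factors, prompt caching and context-length tiers, are easy to put into numbers. The sketch below uses the DeepSeek and Claude Sonnet 4.6 figures from the table. The function names and the 80% cache hit rate are illustrative assumptions, and so is the rule that the long-context rate applies to the whole request once input exceeds 200K tokens; check the provider's documentation for the exact rule.

```python
def blended_input_price(miss_price: float, hit_price: float,
                        cache_hit_rate: float) -> float:
    """Average input price per 1M tokens for a cache hit rate between 0 and 1."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    return (1 - cache_hit_rate) * miss_price + cache_hit_rate * hit_price

def sonnet_request_cost(input_tokens: int, output_tokens: int) -> float:
    """Request cost in dollars with a long-context tier above 200K input tokens.

    Assumption: the premium rate applies to every token in the request.
    """
    if input_tokens > 200_000:
        in_price, out_price = 6.00, 22.50
    else:
        in_price, out_price = 3.00, 15.00
    return input_tokens / 1e6 * in_price + output_tokens / 1e6 * out_price

# DeepSeek input at a hypothetical 80% cache hit rate.
print(blended_input_price(0.28, 0.028, 0.80))
# The same 1,000-token answer from a 100K-token and a 300K-token prompt.
print(sonnet_request_cost(100_000, 1_000), sonnet_request_cost(300_000, 1_000))
```

Under these assumptions, an 80% hit rate brings the effective DeepSeek input price from $0.28 to about $0.078 per 1M tokens, while tripling the prompt from 100K to 300K tokens raises the Sonnet request cost by almost 6x rather than 3x.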

The most impactful lever for most teams is model selection. Sending every request to a flagship model when 70-80% of tasks could be handled by a lightweight model is the most common source of overspending. Teams that implement intelligent routing typically cut API costs by 50-70% without noticeable quality loss.
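The routing arithmetic can be sketched in a few lines. This example assumes 75% of requests go to Claude Haiku 4.5 and the rest to Claude Opus 4.6, that both models use the same number of tokens per request, and that quality is acceptable on the routed share. The function name and the 75% share are illustrative assumptions, not measurements.

```python
def routed_cost_fraction(share_to_light: float, light_price: float,
                         flagship_price: float) -> float:
    """Blended cost as a fraction of sending every request to the flagship."""
    blended = share_to_light * light_price + (1 - share_to_light) * flagship_price
    return blended / flagship_price

# Input prices per 1M tokens from the tables above: Haiku 4.5 $1, Opus 4.6 $5.
fraction = routed_cost_fraction(0.75, 1.00, 5.00)
savings = 1 - fraction
print(f"{savings:.0%} lower spend than flagship-only")
```

Because Haiku 4.5 is priced at one fifth of Opus 4.6 for both input and output, the result (about 60% lower spend) holds for any input/output mix under these assumptions.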

The translation problem: tokens vs. value

Token pricing creates a transparency challenge when you're building products for non-technical buyers. Customers don't think in tokens. They think in tasks.

A product manager doesn't ask "how many tokens will this cost?" They ask "can I summarize 50 documents a month?" A sales leader doesn't budget in millions of tokens. They budget in pipeline generated or deals closed.

This gap between how AI is metered (tokens) and how value is perceived (outcomes) is why most customer-facing AI products don't expose raw token pricing directly. Instead, they translate tokens into something the buyer understands.

| Translation layer | How it works | Example companies |
|---|---|---|
| Credits | Tokens abstracted into a proprietary unit. Different actions consume different credit amounts | Clay, ElevenLabs, Descript |
| Workflow units | Pricing expressed per task completed, not per token | "Per document analyzed," "per meeting transcribed" |
| Bundled into seats | AI usage included in a per-user fee with usage limits | Notion AI, GitHub Copilot |
| Tiered usage caps | Flat fee includes X usage per month, per-unit charges beyond | Cursor, ChatGPT Plus/Teams |
| Outcome-based | Price tied to a measurable result | Intercom Fin: $0.99 per resolved ticket |

Each of these is a packaging decision on top of the underlying token economics. The AI provider still pays per token underneath. The question is how that cost gets expressed to the end customer, and whether your billing infrastructure can handle the translation.
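One way to picture the translation is a credit price derived from the underlying token cost plus a target margin. The sketch below is a hypothetical example: the $0.01 credit value, the 60% target gross margin, the token counts per action, and the function name are all assumptions chosen for illustration, using the Claude Sonnet 4.6 rates of $3/$15 per 1M tokens.

```python
import math

def credits_for_action(input_tokens: int, output_tokens: int,
                       in_price: float, out_price: float,
                       target_margin: float, credit_value: float) -> int:
    """Credits to charge so the action meets the target gross margin."""
    token_cost = input_tokens / 1e6 * in_price + output_tokens / 1e6 * out_price
    # Gross margin = (price - cost) / price, so price = cost / (1 - margin).
    price = token_cost / (1 - target_margin)
    # Round up so a fractional credit never pushes the margin below target.
    return math.ceil(price / credit_value)

# Hypothetical "summarize a document" action: 20,000 tokens in, 1,000 out.
credits = credits_for_action(20_000, 1_000, 3.00, 15.00,
                             target_margin=0.60, credit_value=0.01)
print(credits)
```

The customer sees a fixed number of credits per document, while the token cost and margin stay visible to the pricing team underneath.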

Learn more about credit-based pricing in our deep dive on credit architecture.

Where token pricing fits in the pricing model landscape

Token pricing sits at the most granular end of the pricing spectrum. It works well for infrastructure buyers who want fine-grained control. It works less well for business buyers who need predictability.

| Model | Unit of measure | Cost predictability | Value alignment | Who it works for |
|---|---|---|---|---|
| Seat-based | User count | High | Low (AI breaks the user-value link) | Simple SaaS, collaboration tools |
| Token-based | Raw tokens processed | Low (hard to forecast) | Medium (tracks usage, not outcomes) | API products, developer tools |
| Credit-based | Abstracted units | Medium | Medium-High (maps to actions) | AI products with multiple resource types |
| Per-workflow | Tasks completed | High | High | Vertical AI with bounded task complexity |
| Outcome-based | Business result | High | Highest | Products with clear, attributable results |

Most companies that start with raw token pricing eventually layer an abstraction on top. Credits, bundled tiers, or workflow-based pricing give customers the predictability they need while preserving margin awareness underneath.

The economics: why token pricing changes the game

Token pricing forces margin awareness in a way that seat-based SaaS never did.

| | SaaS economics | AI token economics |
|---|---|---|
| Cost per unit | Near-zero marginal cost per user | Real, variable cost per inference |
| Underpricing risk | Growth tactic (land and expand) | Margin killer (losses compound with usage) |
| Heavy users | Cost the same to serve | Can be loss-making at flat rates |
| Expansion revenue | Seat growth = pure revenue | Usage growth = revenue AND cost growth |
| Typical gross margin | 70-85% | 30-60% depending on model and workload |

Some AI companies have found their top 5% of users consuming 75% of total compute costs while paying the same flat fee as everyone else. Token pricing, or a derivative of it, is one way to fix that misalignment.
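A quick calculation shows how a flat fee turns loss-making for heavy users. The $20 monthly fee and both usage profiles below are hypothetical; the default rates are the Claude Sonnet 4.6 prices of $3/$15 per 1M tokens from the comparison table.

```python
def monthly_margin(flat_fee: float, input_mtok: float, output_mtok: float,
                   in_price: float = 3.00, out_price: float = 15.00) -> float:
    """Fee minus token cost for one user; usage is in millions of tokens."""
    token_cost = input_mtok * in_price + output_mtok * out_price
    return flat_fee - token_cost

# Hypothetical users on the same $20/month plan.
light_user = monthly_margin(20.0, input_mtok=0.5, output_mtok=0.1)
heavy_user = monthly_margin(20.0, input_mtok=20.0, output_mtok=4.0)
print(light_user, heavy_user)
```

With these numbers the light user leaves $17 of margin, while the heavy user costs $120 to serve and loses $100 a month at the same price.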

What to watch for

Token pricing has several known failure modes that show up as companies scale.

Unpredictable bills. Customers who can't forecast spend get nervous. CFOs don't approve open-ended consumption commitments without guardrails. This is why committed-spend models (annual commitments with token drawdown) are becoming more common than pure pay-as-you-go.

Price compression. When you price on tokens, you're pricing on a commodity. Model costs have been dropping roughly 10x every 18 months. Customers expect those savings to pass through. Pure token pricing becomes a race to the bottom unless you layer value on top.

Billing complexity. Multiple models, multiple modalities, input vs. output pricing, cached vs. uncached tokens, fine-tuned model surcharges, batch vs. real-time rates. The permutations multiply fast. Your billing system needs to handle this granularity without requiring engineering work for every pricing change.

Revenue recognition. Prepaid token balances are liabilities until consumed. Expired tokens need proper accounting treatment. Companies that ignore this early build ad-hoc balance logic that doesn't map to ASC 606 or IFRS 15. This becomes painful during due diligence, M&A, or audit preparation.
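The core of the bookkeeping can be sketched as a balance that moves value from a deferred (liability) bucket to a recognized bucket as tokens are consumed. The class and method names are hypothetical. The sketch deliberately leaves out expiry, because how expired balances are treated depends on the company's accounting policy.

```python
from dataclasses import dataclass
from decimal import Decimal

@dataclass
class PrepaidBalance:
    deferred: Decimal = Decimal("0")    # paid for but not yet consumed (liability)
    recognized: Decimal = Decimal("0")  # revenue recognized as usage occurs

    def top_up(self, amount: Decimal) -> None:
        """Customer prepays: the full amount starts as deferred revenue."""
        self.deferred += amount

    def consume(self, amount: Decimal) -> None:
        """Usage moves value from the deferred bucket to recognized revenue."""
        if amount > self.deferred:
            raise ValueError("consumption exceeds prepaid balance")
        self.deferred -= amount
        self.recognized += amount

balance = PrepaidBalance()
balance.top_up(Decimal("1000"))
balance.consume(Decimal("250"))
print(balance.deferred, balance.recognized)
```

Keeping the two buckets explicit from day one makes it far easier to reconcile usage data with the ledger later.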

The stranded credits problem. When token allocations are locked to individual users rather than shared across an organization, you get artificial "breakage." Power users hit limits while casual users sit on unused balances. This erodes trust and accelerates churn. The better approach: organization-level pools with per-user guardrails.
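An organization-level pool with per-user guardrails can be sketched as follows. The class name, the pool size, and the cap are hypothetical, and a production system would also need concurrency control and period resets.

```python
class OrgTokenPool:
    """Shared token pool for an organization, with a cap per user."""

    def __init__(self, pool_tokens: int, per_user_cap: int) -> None:
        self.remaining = pool_tokens
        self.per_user_cap = per_user_cap
        self.used_by_user: dict[str, int] = {}

    def try_consume(self, user: str, tokens: int) -> bool:
        """Deduct tokens if both the pool and the user's cap allow it."""
        used = self.used_by_user.get(user, 0)
        if tokens > self.remaining or used + tokens > self.per_user_cap:
            return False
        self.remaining -= tokens
        self.used_by_user[user] = used + tokens
        return True

pool = OrgTokenPool(pool_tokens=1_000_000, per_user_cap=600_000)
print(pool.try_consume("power_user", 500_000))   # within pool and cap
print(pool.try_consume("casual_user", 10_000))   # draws from the same pool
print(pool.try_consume("power_user", 200_000))   # would exceed the user's cap
```

If the same 1M tokens were split evenly across ten users, the power user would stall at 100K while most of the allocation sat unused. Here the power user can draw up to the 600K guardrail before being stopped.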

Token pricing and billing infrastructure

Token pricing sounds simple in theory. In practice, it requires infrastructure that most billing systems weren't designed for.

You need real-time metering that ingests usage events at scale. You need flexible rate cards that map different token types to different prices without code changes. You need balance management for prepaid models. You need transparency tools so customers can track consumption. And you need all of this to feed into invoicing and revenue recognition.
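The "rate card without code changes" requirement usually means prices live in data, not in application logic. The sketch below rates a batch of usage events against a rate card loaded from JSON. The model keys, event fields, and customer names are hypothetical; the prices are the Claude Sonnet 4.6 and Claude Haiku 4.5 rates from the comparison tables.

```python
import json
from collections import defaultdict

# Rate card as data: changing a price means editing this document, not the code.
RATE_CARD_JSON = """
{
  "sonnet-4.6": {"input": 3.00, "output": 15.00},
  "haiku-4.5":  {"input": 1.00, "output": 5.00}
}
"""

def rate_events(events: list[dict], rate_card: dict) -> dict[str, float]:
    """Total charge in dollars per customer for a list of usage events."""
    totals: dict[str, float] = defaultdict(float)
    for event in events:
        price_per_mtok = rate_card[event["model"]][event["token_type"]]
        totals[event["customer"]] += event["tokens"] / 1_000_000 * price_per_mtok
    return dict(totals)

events = [
    {"customer": "acme", "model": "sonnet-4.6", "token_type": "input", "tokens": 200_000},
    {"customer": "acme", "model": "sonnet-4.6", "token_type": "output", "tokens": 50_000},
    {"customer": "acme", "model": "haiku-4.5", "token_type": "input", "tokens": 1_000_000},
    {"customer": "globex", "model": "haiku-4.5", "token_type": "output", "tokens": 100_000},
]
totals = rate_events(events, json.loads(RATE_CARD_JSON))
print(totals)
```

A real system would add cached and batch token types, per-customer overrides, and effective dates to the rate card, but the separation between events, prices, and rating logic stays the same.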

Most companies start by building this on top of Stripe or a homegrown system. It works until it doesn't. Usually around the time you're managing multiple models, multiple customer segments with different rates, or enterprise contracts with committed-spend structures layered on top of token consumption.

That's the billing v1 to billing v2 transition. Not because the first system was bad, but because the pricing model outgrew the infrastructure underneath it.

Learn more about this transition in our post on hybrid pricing and why most companies end up combining seats, usage, and credits as they scale.
